Mongo vs Postgres as a simple JSON object store

We needed a JSON document store for a microservice. The documents are relatively large, about 1MB, and they should be retrieved by id. Mongo and Postgres are the obvious candidates… Read more »

We have been happily using Postgres-BDR for years as a multi-master database for fault-tolerant applications – it’s been incredibly robust for us. The advantage of a multi-master database is that… Read more »

When we’re creating an API with fault-tolerance, to reduce the number of single points of failure, we often use Postgres BDR, a multi-master database, which allows us to provide two… Read more »

Using vCenter’s migration over the internet to a remote data center is often too slow for practical purposes – a 200GB VM may take several hours to move and that may… Read more »

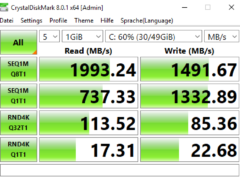

Here are some disk performance measurements (made on a Windows 10 VM on vSphere 6.5 with CrystalDiskMark) across different types of datastore (local SSD array, NFS (sync), NFS (async) and… Read more »

Sometimes you need to block access to a specific IP address for test purposes – for instance to simulate unavailability of a web service. Here’s how to do it on macOS… Read more »

If you have created a container without specifying a volume, Docker will create one for you. If you later decide you’d rather have the container’s data somewhere else, you can… Read more »

Postgres-BDR is an excellent multi-master replication clustering extension for PostgreSQL (https://www.2ndquadrant.com/en/resources/postgres-bdr-2ndquadrant/). We often use it for fault-tolerant systems – it’s asynchronous and uncomplicated to set up (at least if… Read more »

Current plans for contact-tracing are based around mobile phones, because these have built-in capabilities for location tracking and contact detection. An alternative to mobile phones for contact-tracing would be a… Read more »

Each contact you have with another person potentially infects you with a virus. If the person you have contact with later tests positive for that virus, the contact you had… Read more »